It seems the possibility that “placebo responses” are getting bigger is newsworthy. A new systematic review just published in the journal PAIN[1] has been receiving a fair amount of attention. The headline finding is that the placebo “response” is growing in clinical trials of analgesic drugs for neuropathic pain. There are further interesting quirks in the data. The increase in placebo “responses” was seen only in trials conducted in the USA. These “responses” were larger as the size of the placebo group increased or the duration of the trial grew longer. In fact the specificity of the phenomenon to USA-based trials seems largely a function of the larger samples and longer studies conducted there but not elsewhere.

One suggested implication of this is that it helps to explain why most new drugs for neuropathic pain don’t perform very well in trials. They just can’t out-muscle these pumped-up and ripped placebo “effects”. While this phenomenon was observed in the placebo groups it was not seen in the drug groups. The average “response” seen in groups receiving the actual drugs over time remained stable. On the face of it that’s potentially reason to worry. Could placebo effects be hiding potentially great new treatments for a group of people who currently do not have many?

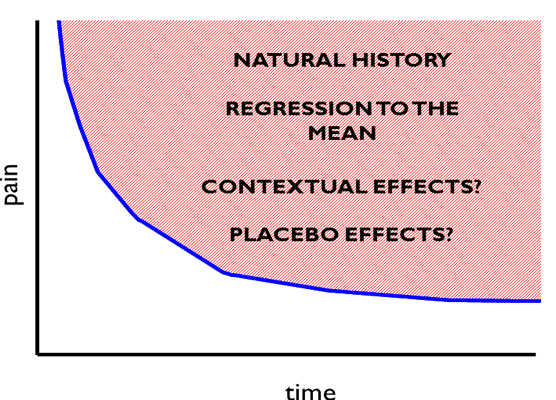

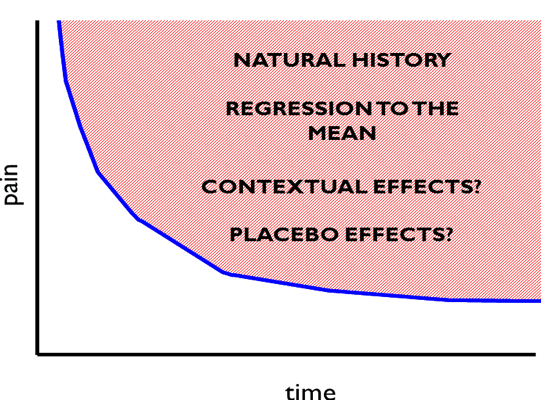

You may have noticed that I seem a bit trigger happy with my quotation marks around the words “response” and “effects”. It is interesting to consider how the use of language in this discussion impacts upon how we see the data. A key phrase being used here is “placebo response”. Intuitively the phrase appears to mean how much of an effect the delivery of a placebo had on that group, i.e. the “effect” of delivering the placebo pills. But this is not what was measured. What was actually measured was the change in pain from baseline to follow-up in the group that received placebo. Steve Kamper has written brilliantly about the problem with that here at BiM before [2]. As the authors of this new review acknowledge, this actually reflects the combined effect of regression to the mean, natural history, various contextual influences and the specific “placebo” effect (see Figure 1), if indeed there is one. Regression to the mean is simply an artefact to be controlled for, and it is clearly a stretch to wrap up that or natural history (what would have happened to the symptoms over time regardless of intervention) into the concept of “response” or patient benefit. Within the placebo group, or within individuals in that group, we have no way of divining the actual effect of giving the placebo. So rather than “placebo response”, what we are really measuring is “what happened on average to those people who were randomised to get a placebo”. This is a measure of outcome, not just, or perhaps sometimes not at all, a measure of effect or response. If you think this is an exercise in pointy-headed pedantry I hope to convince you it is not.
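To make the regression to the mean point concrete, here is a minimal simulation sketch. It is not taken from the review: the pain scale, the entry threshold and the amount of day-to-day variability are all made-up assumptions, and the function name is hypothetical. Nobody in this simulated group receives anything at all, not even a placebo, and their underlying pain never changes, yet the group average still falls between baseline and follow-up simply because people were enrolled on a day when their score was high.

```python
import random
import statistics


def simulate_untreated_group(n_screened=20000, entry_threshold=6.0, seed=1):
    """Toy model of trial entry with no intervention of any kind.

    Assumptions (illustrative only): each person has a stable "usual" pain
    level, and every measurement adds independent day-to-day noise.
    Only people scoring at or above `entry_threshold` at baseline enrol.
    """
    rng = random.Random(seed)
    baseline_scores = []
    followup_scores = []
    for _ in range(n_screened):
        usual_pain = rng.gauss(5.0, 1.0)             # stable underlying level
        baseline = usual_pain + rng.gauss(0.0, 1.5)  # noisy screening measurement
        if baseline < entry_threshold:
            continue                                 # not painful enough to enrol
        followup = usual_pain + rng.gauss(0.0, 1.5)  # same person, later, untreated
        baseline_scores.append(baseline)
        followup_scores.append(followup)
    return (
        statistics.mean(baseline_scores),
        statistics.mean(followup_scores),
        len(baseline_scores),
    )


if __name__ == "__main__":
    mean_baseline, mean_followup, n_enrolled = simulate_untreated_group()
    print(f"enrolled: {n_enrolled}")
    print(f"mean pain at baseline:  {mean_baseline:.2f}")
    print(f"mean pain at follow-up: {mean_followup:.2f}")
    print(f"apparent 'response':    {mean_baseline - mean_followup:.2f}")
```

In a real placebo arm this artefact would be mixed in with natural history and contextual influences, which is exactly why the within-group change cannot be read as the effect of the placebo.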

If we take the common interpretation of these data and view this as an issue of placebo “response”, it becomes easy to frame the argument as a possible excuse for disappointing drug treatments. We observe this odd phenomenon whereby the mysterious and much talked about (and hyped) placebo effect appears to be growing and this effect is being sustained beyond what we might expect, raising the bar that new drugs must clear to a challenging level. Explaining this becomes tricky, though the authors of the review offer some interesting theoretical speculation. For example, perhaps the analgesia of an early placebo effect reinforces expectations and predictions of pain relief and sustains the placebo effect in a positive feedback loop; perhaps there are late-onset mechanisms that underpin sustained placebo responses; perhaps the prolonged engagement with trial staff in longer trials keeps the response going; perhaps the study marketers and recruiters are getting better at raising expectations.

But if instead we frame this simply as the “outcomes” in the groups receiving placebo being better in more recent trials, then the findings potentially become easier to understand. Larger trials should be more representative of the population of interest and less selective in recruitment. Maybe publication biases were more pronounced in earlier time periods, favouring trials where outcomes were poorer in the placebo group (and treatment effects were therefore larger). Following more people over a longer time offers a more accurate view of natural history than fewer people over a shorter time. If we take this view then rather than being presented with the problem of expanding placebo responses underestimating the true effectiveness of drugs, the picture shifts to a situation where more recent trials correct the problem of earlier trials exaggerating the effectiveness of those drugs by systematically underestimating outcomes in groups that do not receive them. The decline effect is a well-observed phenomenon [3] across medical research and beyond: early trials generally demonstrate larger and more positive effects that decline as more, larger, and hopefully better studies are conducted. This interpretation fits more neatly with what we see when we consider the size of placebo effects in studies that are designed to specifically measure them, that is, studies which compare a placebo group to a no treatment group. Those studies generally show us that placebo effects are small, a bit unreliable and generally short-lived [4].
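The way small early trials can exaggerate a drug's benefit, and larger later trials then pull the estimate back down, can also be sketched with a toy simulation. Everything here is an assumption chosen for illustration rather than a figure from the review: the true benefit of 0.5 points, the spread of outcomes, the trial sizes, the function name and the crude rule that a trial is only "published" if the drug looks significantly better than placebo.

```python
import math
import random
import statistics


def mean_published_benefit(n_per_arm, n_trials=1000, true_benefit=0.5,
                           outcome_sd=2.0, seed=7):
    """Average drug-minus-placebo benefit among 'published' toy trials.

    Assumptions (illustrative only): outcomes are pain reductions, the drug
    truly adds `true_benefit` points over placebo, and a trial is published
    only when its estimated benefit exceeds 1.96 standard errors.
    """
    rng = random.Random(seed)
    published = []
    for _ in range(n_trials):
        placebo = [rng.gauss(1.0, outcome_sd) for _ in range(n_per_arm)]
        drug = [rng.gauss(1.0 + true_benefit, outcome_sd) for _ in range(n_per_arm)]
        estimate = statistics.mean(drug) - statistics.mean(placebo)
        std_error = math.sqrt(
            statistics.variance(drug) / n_per_arm
            + statistics.variance(placebo) / n_per_arm
        )
        if estimate / std_error > 1.96:   # crude significance filter
            published.append(estimate)
    return statistics.mean(published), len(published)


if __name__ == "__main__":
    for n_per_arm in (20, 200):
        benefit, n_published = mean_published_benefit(n_per_arm)
        print(f"{n_per_arm:>3} per arm: {n_published} of 1000 trials published, "
              f"mean published benefit = {benefit:.2f} (true benefit = 0.50)")
```

Under these assumptions the small trials that clear the filter report a benefit well above the true one, partly because they happened to draw a placebo group that did poorly, while the large trials land much closer to the truth. That is the same direction of correction as the pattern described above, though the sketch says nothing about how much of the real data it explains.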

This is an interesting and well conducted review and the data are important in helping us to explain the bigger picture regarding medications for neuropathic pain. But using the term “placebo responses” to label something that represents much more impacts upon the way we interpret the results. Arguably the big challenge presented by the data in this review is not the size of the placebo “responses” but the stagnation seen in the outcomes from the groups receiving the drugs. The benefit offered by our drugs is smaller than we thought and does not seem to be getting any better any time soon. Not quite as likely to make the news, but perhaps far more newsworthy.

*Huge thanks to David Colquhoun for his comments and suggestions on an earlier draft of this blog. If you are interested in some of the stats underlying the exaggeration of effect sizes by small studies, read this great paper by him: http://rsos.royalsocietypublishing.org/content/1/3/140216

Neil O’Connell

As well as writing for Body in Mind, Dr Neil O’Connell (PhD, not MD) is a lecturer and researcher in the College of Health and Life Sciences (Department of Clinical Sciences) at Brunel University London, UK. He divides his time between research and training new physiotherapists and previously worked extensively as a musculoskeletal physiotherapist.

He also tweets! @NeilOConnell

Neil’s main research interests are chronic low back pain and chronic pain more broadly, with a focus on evidence-based practice. He has conducted numerous systematic reviews and is a member of the editorial board of the Cochrane Collaboration’s Pain, Palliative and Supportive Care Group (PaPaS). He also makes a mean Yorkshire pudding despite being a child of Essex.

References

1. Tuttle AH, Tohyama S, Ramsay T, Kimmelman J, Schweinhardt P, Bennett GJ, Mogil JS. Increasing placebo responses over time in U.S. clinical trials of neuropathic pain. Pain 2015; [Epub ahead of print]

2. Kamper S. Putting the placebo out to pasture. Body in Mind, November 8, 2012.

3. Ioannidis JP. Why most discovered true associations are inflated. Epidemiology 2008;19(5):640-8. doi: 10.1097/EDE.0b013e31818131e7.

4. Hróbjartsson A, Gøtzsche PC. Placebo interventions for all clinical conditions. Cochrane Database Syst Rev 2010;(1):CD003974. doi: 10.1002/14651858.CD003974.pub3.