Editor’s note: The North American Pain School (NAPS) took place 19-24 June 2022, in Montebello, Québec, Canada. NAPS – an educational initiative of the International Association for the Study of Pain (IASP) and Analgesic, Anesthetic, and Addiction Clinical Trial Translations, Innovations, Opportunities, and Networks (ACTTION), and presented by the Quebec Pain Research Network (QPRN) – brings together leading experts in pain research and management to provide trainees with scientific education, professional development, and networking experiences. The theme was, “Controversies in Pain Research.” Five of the trainees were also selected to serve as PRF-NAPS Correspondents, who provided firsthand reporting from the event, including interviews with NAPS’ Visiting Faculty members and Patient Partners, summaries of scientific sessions, and coverage on social media. Here, PRF-NAPS Correspondent Don Daniel Ocay, PhD, a postdoctoral fellow at Boston Children’s Hospital, US, provides coverage of a talk by NAPS Visiting Faculty member Sean Mackey, MD, PhD, Stanford University Medical Center, California, US.

On the final morning of the 2022 North American Pain School, Visiting Faculty member Sean Mackey gave a talk on the controversial topic, “Can Real-World Data Replace Randomized Controlled Trials?”

A vision for the future

Mackey began the session by sharing his vision for the future – imagine a world where clinicians have access to high-quality data and state-of-the-art technology to inform best treatment practices, and where researchers work with real-world patient data to generalize their findings to the population.

Better data are needed to understand problems and guide action, and no individual clinician can explain the complexity of pain. We need data systems that will help compile all biopsychosocial information from patients to inform and augment clinical care. Mackey and his team have developed an open-source learning health system called CHOIR, or the Collaborative Health Outcomes Information Registry. The purpose of a continuously evolving learning health system, as defined by the National Academy of Medicine, is to bring scientists, clinicians, policy makers, administrators, and patients together to collect data – in real time – for point-of-care decision-making and longitudinal tracking of patients.

Why is this important?

Mackey highlighted the increased emphasis on rigor and reproducibility in scientific research, and how such data, collected in real time, could help to answer the fundamental questions that keep most clinicians awake at night: How do you know the best treatment for a patient, and how do you know if you’ve made your patient better? However, this raises another question – what does “better” represent? It may not necessarily mean a decrease in pain intensity.

A faulty gold standard?

Randomized controlled trials (RCTs) have been put forward as the gold standard by the “father” of evidence-based medicine, David Sackett. In a 1996 editorial, Sackett wrote, “Because the randomized trial, and especially the systematic review of several randomized trials, is so much more likely to inform us and so much less likely to mislead us, it has become the ‘gold standard’ for judging whether a treatment does more good than harm.”

What are the strengths of RCTs? They are highly controlled (i.e., they reduce bias), include well-defined patients, and allow inference of causality and direction, to name a few. However, with strengths come limitations. RCTs may not be generalizable, they are costly and may be conducted in the sponsor’s own interest (e.g., for pharmaceutical companies), randomization can raise ethical concerns, and they have problems with blinding and attrition (i.e., dropouts). In addition, the primary outcomes of RCTs shape their story, such that the treatment or therapeutic intervention may fail for the primary outcome but improve secondary outcomes.

Mackey highlighted three challenges that clinical research faces:

1. It may not be relevant to clinical practice (i.e., it lacks external validity). Only about 10% of people with chronic pain qualify for clinical trials; therefore, we cannot generalize results. Mackey illustrated this problem using one of his team’s publications investigating brain gray matter density from MRI scans of patients with chronic low back pain (Ung et al., 2014). The patients had no increased severity of mood symptoms, had no radicular symptoms, and were not taking medications. Therefore, none of those patients were representative of those seen in clinics and academic centers. In 2018, Angus Deaton and Nancy Cartwright argued that RCTs, when properly designed, can provide an unbiased result, but not necessarily a precise one (Deaton and Cartwright, 2018). Furthermore, the misapplication of findings from RCTs can have a costly impact on patients and the economy.

2. Most clinical research is slow. Mackey stated that it takes an average of 17 years for only 14% of research findings to lead to widespread changes.

3. The evidence paradox. There are about 18,000 RCTs published every year.

The solution?

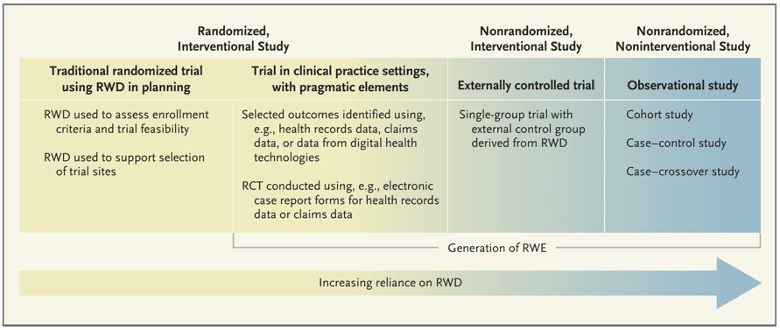

Mackey argued that we need a more practical and integrated approach (i.e., we need more real-world data research). The U.S. Food & Drug Administration, as part of the 21st Century Cures Act, has now incorporated real-world data and real-world evidence into its decision-making on the approval of devices and drugs.

Mackey believes we need to move beyond traditional RCTs and start integrating real-world data from well-controlled observational trials and pragmatic trials. Mackey reinforced this position using examples from two CHOIR studies.

In 2019, an observational trial was published investigating the effects of smoking in more than 8,000 patients with chronic pain (Khan et al., 2019). This is a case where the ethical limits on randomization apply: We cannot randomize people to smoke. Instead, in an attempt to approximate an RCT, propensity analysis can be applied to retrospective real-world data. In this study, the researchers took the characteristics of the smokers, determined the contributing factors, and matched the smokers on those factors to a control group. Smokers’ outcomes were worse when coming to a clinic, and stayed worse after multidisciplinary treatment.

Mackey’s team did a similar study investigating the effects of cannabis in more than 7,000 patients with chronic pain and found similar results – cannabis users’ outcomes were poorer coming into a clinic and remained so (Sturgeon et al., 2020). However, the main limitation of these two studies is that causality cannot be established with this analysis model. Propensity analysis is based on matching the observed covariates. What about the unobserved ones? They can’t be matched because we don’t know what they are.
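To make the matching idea concrete, here is a minimal sketch of propensity score matching in Python. It is an illustration only, not the method used in the CHOIR studies: the cohort is synthetic, the two covariates (`age`, `mood`), the built-in effect size, the greedy 1:1 nearest-neighbor matching, and the caliper of 0.05 are all assumptions chosen for the example. A logistic model estimates each person’s probability of being exposed from observed covariates, and each exposed person is then paired with the unexposed person whose score is closest.

```python
import math
import random


def predict(w, x):
    """Logistic model: estimated probability of exposure given covariates x."""
    z = w[0] + sum(wj * xj for wj, xj in zip(w[1:], x))
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, y, lr=0.5, epochs=300):
    """Fit logistic regression by batch gradient descent (bias is w[0])."""
    w = [0.0] * (len(X[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = predict(w, xi) - yi
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * gj / len(X) for wj, gj in zip(w, grad)]
    return w


def match_nearest(scores, exposed, caliper=0.05):
    """Greedy 1:1 nearest-neighbor matching on the score, without replacement."""
    controls = {i for i, e in enumerate(exposed) if e == 0}
    pairs = []
    for i, e in enumerate(exposed):
        if e == 1 and controls:
            j = min(controls, key=lambda c: abs(scores[c] - scores[i]))
            if abs(scores[j] - scores[i]) <= caliper:
                pairs.append((i, j))
                controls.remove(j)
    return pairs


def simulate(n=400, seed=0):
    """Synthetic cohort (hypothetical, not CHOIR data)."""
    rng = random.Random(seed)
    X, exposed, outcome = [], [], []
    for _ in range(n):
        age, mood = rng.gauss(0, 1), rng.gauss(0, 1)
        # Worse mood makes exposure more likely AND raises the pain score,
        # so mood confounds the naive comparison.
        p = 1.0 / (1.0 + math.exp(-(0.8 * mood - 0.5)))
        e = 1 if rng.random() < p else 0
        X.append([age, mood])
        exposed.append(e)
        outcome.append(5.0 + 1.0 * mood + 0.5 * e + rng.gauss(0, 1))
    return X, exposed, outcome


def mean(values):
    return sum(values) / len(values)


X, exposed, outcome = simulate()
weights = fit_logistic(X, exposed)
scores = [predict(weights, x) for x in X]
pairs = match_nearest(scores, exposed)

naive = (mean([o for o, e in zip(outcome, exposed) if e == 1])
         - mean([o for o, e in zip(outcome, exposed) if e == 0]))
matched = mean([outcome[i] - outcome[j] for i, j in pairs])
print(f"matched pairs: {len(pairs)}")
print(f"naive difference in outcome:   {naive:.2f}")
print(f"matched difference in outcome: {matched:.2f}")
```

The sketch balances only the covariates that were fed into the model. Any factor that influences both exposure and outcome but was never recorded stays unbalanced, which is exactly the limitation described above.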

Pragmatic trials and RCTs: Each has a role and a place

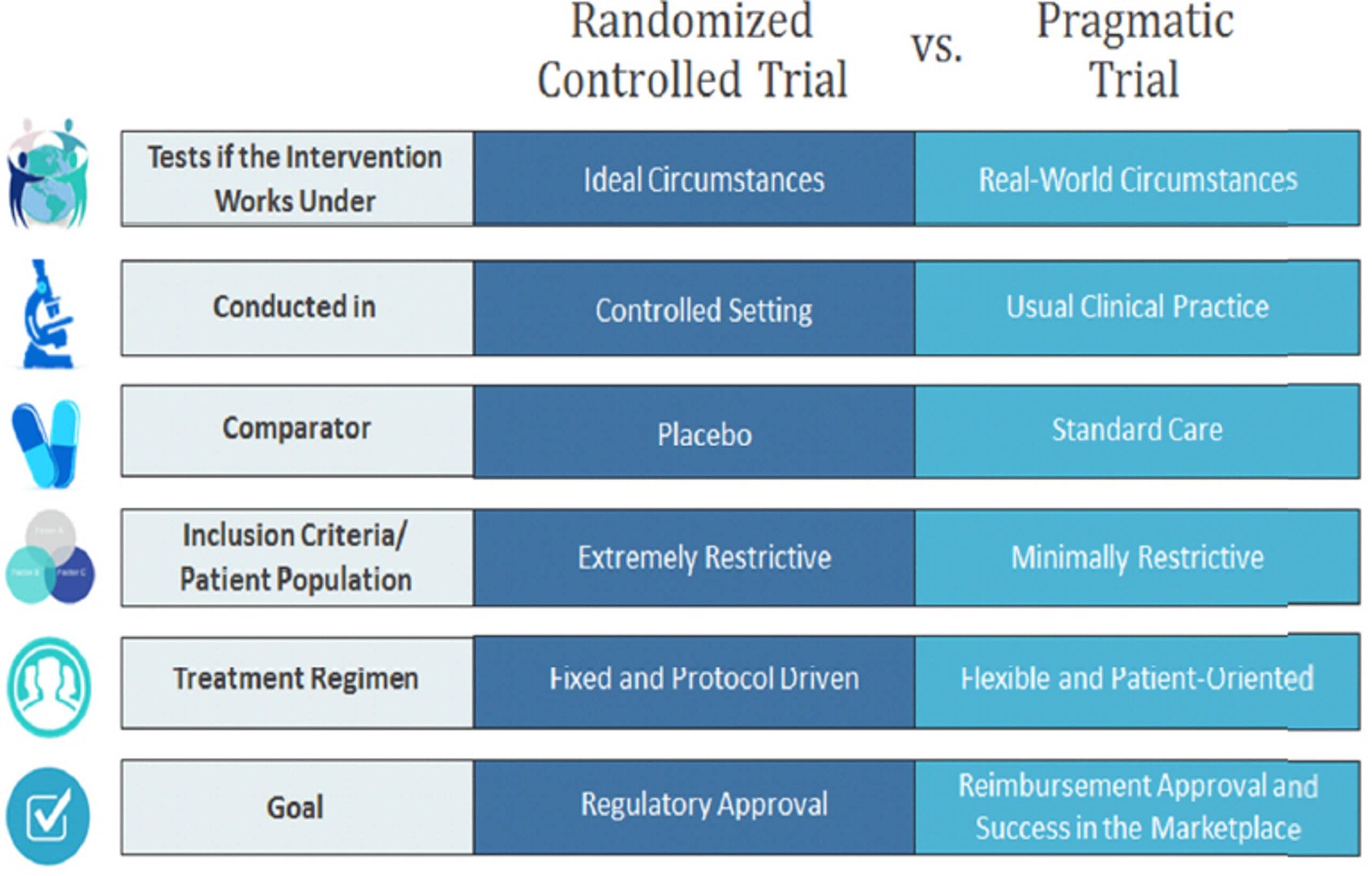

Mackey went on to discuss the differences between pragmatic trials and RCTs. Typically, RCTs are conducted in controlled settings, whereas pragmatic trials are done in usual care. There are no comparators for pragmatic trials, whereas a placebo is used for RCTs. Also, pragmatic trials usually have looser inclusion criteria and different goals.

Mackey then brought up a controversial editorial from 2005 by Ernst and Canter which stated that “while pragmatic trials may approximate more closely to the day-to-day clinical situation in which patients are treated, the results they produce are frequently next to meaningless.” There are limitations to pragmatic trials such as:

1. The quality of the data. Different clinical environments may have modifications in their electronic medical records leading to different data input.

2. The findings may be overgeneralized. For example, a national study may only represent the four states conducting the study in their centers.

3. A pragmatic trial cannot serve as an efficacy trial.

Mackey’s take-home message regarding pragmatic trials and RCTs? It is not that one is better than the other. Each has its own role and place. It is a question of choosing the right study design for the question you are trying to ask. They each have strengths and weaknesses. There is no gold standard. Mackey pointed out that although David Sackett may have gotten it wrong about RCTs, he beautifully defined evidence-based medicine as “integrating individual clinical expertise with … the best available external clinical evidence from systematic research.” Mackey believes that this should be extended to obtain viewpoints from Patient Partners. While concluding, Mackey urged everyone in attendance to remember that this is all about the person we are taking care of. We ultimately want to improve the lives of patients.

Don Daniel Ocay, PhD, is a postdoctoral fellow at Boston Children’s Hospital, US. You can follow him on Twitter – @DonOcay