When a person feels disgust, fear, or pain, there’s a good chance an observer can identify those emotions from the facial expressions of the individual they are watching. But what about animals like mice – do they make recognizable facial expressions in response to stimuli that evoke strong emotions? And if they do, can humans make any sense of those expressions at all? A new study says yes on both counts.

Research led by Nadine Gogolla, Max Planck Institute of Neurobiology, Martinsried, Germany, now reveals that, in response to emotionally salient events, mice, just like people, make stereotypical facial expressions, and these expressions could be identified and classified by a machine learning algorithm. The facial expressions were indicative of disgust, pleasure, pain, malaise, or fear. Further, the investigators used calcium imaging to uncover the neural basis of these findings and discovered that activity of “face neurons” in the insular cortex was associated with different facial expressions.

“This is a great study, because the authors did not stop after they developed the algorithm that identified the facial features in response to specific emotionally linked stimuli, but rather went deeper to gain some mechanistic insight by using two-photon imaging,” said Jeffrey Mogil, McGill University, Montreal, Canada, who was not involved with the new research.

“[First author Nejc] Dolensek et al. provide an objective analysis tool that is essential to be able to understand the neurobiological mechanisms of emotions, to identify species-specific emotions, and to identify their variability across individuals,” wrote Benoit Girard and Camilla Bellone, University of Geneva, Switzerland, in an accompanying Perspective.

The research and Perspective were published April 3, 2020, in Science.

Facial expressions reveal distinct emotion events

The path to the new study was not planned, according to Dolensek, a PhD student at Max Planck Institute of Neurobiology.

“I have to admit that it is a bit random how the story came about. I first was focused on using two-photon imaging of the insular cortex and soon realized I needed something to correlate the neuronal activity to, so I recorded pupillary responses to a variety of stimuli. It was not until I zoomed out of the image a bit further that I noticed that the mice had responded with very slight but distinct facial features to the different stimuli,” Dolensek explained.

To see if mice exhibited characteristic facial expressions in response to different emotionally salient stimuli, as people do, Dolensek and colleagues exposed the animals to a diverse set of sensory stimuli assumed to trigger changes in the emotional state of the animals. These included tail shocks, a sucrose solution, a quinine solution, lithium chloride, escape behaviors, and freezing behaviors. The group monitored the facial expressions of these head-fixed animals by using a video camera, both at baseline and in response to the stimuli.

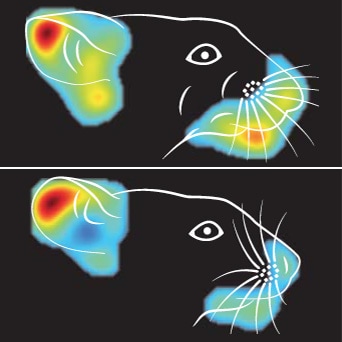

The team found that the animals responded to the various stimuli with observable facial movements. But it was difficult for the investigators to interpret what those movements meant to the animals – what emotion event underlay them. So they used a machine learning algorithm to learn more.

They saw that their learning algorithm could cluster and classify the facial expressions into distinct emotional events resulting from the various stimuli. These included pain (in response to the tail shock), pleasure (from sucrose), disgust (from quinine), malaise (from lithium chloride), active fear (escape behavior), and passive fear (freezing behavior). In fact, the algorithm could predict the underlying emotion event solely from facial expressions across different mice, with more than 90% accuracy.
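
To make the idea of predicting emotion events "across different mice" concrete, here is a minimal sketch in Python. It is not the authors' pipeline: the article says only that a machine learning algorithm was used, so a simple nearest-centroid classifier stands in for it, the feature vectors are synthetic, and every name (`make_sample`, `leave_one_mouse_out`, the mouse IDs) is hypothetical. The sketch trains on all mice but one and then tests on the held-out animal.

```python
import math
import random

# Three of the emotion events named in the article; the features are synthetic.
EMOTIONS = ["pain", "pleasure", "disgust"]

def make_sample(emotion, rng, n_features=6, noise=0.3):
    """Build a made-up 'facial feature' vector: two features are raised per emotion."""
    base = [0.0] * n_features
    idx = EMOTIONS.index(emotion)
    base[2 * idx] = 1.0
    base[2 * idx + 1] = 1.0
    return [b + rng.gauss(0.0, noise) for b in base]

def centroid(vectors):
    """Average a list of equal-length vectors, feature by feature."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def fit(samples):
    """samples: list of (vector, emotion). Returns {emotion: centroid}."""
    by_label = {}
    for vec, label in samples:
        by_label.setdefault(label, []).append(vec)
    return {label: centroid(vecs) for label, vecs in by_label.items()}

def predict(model, vec):
    """Return the emotion whose centroid is closest to vec."""
    return min(model, key=lambda label: math.dist(model[label], vec))

def leave_one_mouse_out(data):
    """data: {mouse_id: [(vector, emotion), ...]}. Returns overall accuracy."""
    correct = total = 0
    for held_out in data:
        train = [s for m, samples in data.items() if m != held_out for s in samples]
        model = fit(train)
        for vec, label in data[held_out]:
            correct += predict(model, vec) == label
            total += 1
    return correct / total

rng = random.Random(0)  # fixed seed so the synthetic data is reproducible
data = {
    mouse: [(make_sample(e, rng), e) for e in EMOTIONS for _ in range(20)]
    for mouse in ["mouse_a", "mouse_b", "mouse_c"]
}
accuracy = leave_one_mouse_out(data)
print(f"held-out-mouse accuracy: {accuracy:.2f}")
```

Holding out a whole animal, rather than random frames, is what makes the accuracy a statement about generalization across mice. The number this toy prints says nothing about the accuracy reported in the study.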

The group also showed the specificity of the facial expressions to the emotional event that the different stimuli caused. That is, as just one example, facial expressions of pain in response to tail shock were different from facial expressions seen in response to the other stimuli. The only exception was the facial expression of active fear, which also resembled the facial expressions evoked by pain and disgust, and may thus capture features of a number of different emotion states.

Interestingly, the facial expressions elicited by tail shock, the painful stimulus, differed slightly from what had been previously shown by Jeffrey Mogil, Mark Zylka, and others (Langford et al., 2010; Tuttle et al., 2018; Abdus-Saboor et al., 2019; PRF related news), where a hallmark of the facial response to pain was orbital tightening (or squinting), which was absent in the current study.

Reflexes or something more fundamental?

The researchers next asked whether the facial expressions they observed were indicative of fundamental features of emotions, or were merely reflex-like reactions. The features they tested included the intensity of the emotion; valence (whether the animal experiences an emotion as good or bad); generalization of the emotion among different sensory experiences; flexibility of the emotion depending upon the animal’s internal state; and persistence of the emotion. The results showed that the facial expressions indeed reflected such core aspects of emotions.

For instance, when the group varied the strength of a stimulus, such as increasing the strength of the tail shocks or the concentration of sucrose or quinine, the facial expressions of the animals became more intense. To test valence, when the authors used salt at low concentrations as a stimulus, they found that it elicited facial expressions indicative of pleasure and only weak similarities to expressions of disgust. But they saw the inverse with high salt concentrations: expressions of disgust rather than pleasure. This is most likely because salt is appetitive to mice at low concentrations and aversive at high concentrations. These data showed that the facial expressions could be decoupled from the underlying stimulus and generalized across different sensory experiences.

With regard to flexibility of the emotions, the authors manipulated the internal state of the animals without varying the stimulus and observed what effect that had on the facial expressions. In particular, mice showed stronger expressions of pleasure in response to water or to sucrose when they were thirsty compared to when they were not.

The researchers also wanted to test how learning might affect the facial expressions. Here, they exposed mice to a sucrose solution and then injected the animals with lithium chloride to produce a conditioned taste aversion. Before this manipulation, the animals’ facial expressions were indicative of pleasure in response to sucrose. But after this learning paradigm, when the animals now associated sucrose with the malaise that lithium chloride causes, the sugar elicited facial expressions of disgust.

Finally, since emotions reflect one’s internal state, one would expect that facial expressions would vary even with the same repeated stimulus. This turned out to be the case; whether in the same mouse or across different animals, facial expressions varied in terms of how strong they were, when the expressions first emerged, and how long they lasted, in response to the same repeated stimulus. Facial expressions could also disappear and come back spontaneously.

For instance, while the mice’s facial expressions changed immediately (within five seconds) in response to most (about 90%) of the stimuli, many facial expression changes occurred more than five seconds after the stimuli were applied. Likewise, there was great variability in how long the expressions lasted. Roughly 60% of facial expressions lasted for less than five seconds, about 23% lasted for five to 15 seconds, and approximately 17% lasted beyond 15 seconds.
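
The duration breakdown above amounts to sorting expression lengths into three bins. A minimal Python sketch follows, assuming the "five to 15 seconds" bin includes both endpoints (the article does not say). The function name `bin_durations` and the example durations are hypothetical and are not data from the study.

```python
def bin_durations(durations_s):
    """Return the share of expressions falling in each duration bin (seconds)."""
    if not durations_s:
        raise ValueError("need at least one duration")
    counts = {"<5 s": 0, "5-15 s": 0, ">15 s": 0}
    for d in durations_s:
        if d < 5:
            counts["<5 s"] += 1
        elif d <= 15:  # assumption: both bounds of the middle bin are inclusive
            counts["5-15 s"] += 1
        else:
            counts[">15 s"] += 1
    total = len(durations_s)
    return {label: n / total for label, n in counts.items()}

# Made-up durations for illustration only.
example = [1.2, 3.0, 4.5, 0.8, 2.2, 7.5, 12.0, 20.0, 31.0, 4.9]
shares = bin_durations(example)
for label, share in shares.items():
    print(f"{label}: {share:.0%}")
```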

“Face neurons” in the insular cortex

To understand the underlying neural basis for the facial expressions, the researchers turned to optogenetics. In particular, by activating neurons in different brain areas with light, they could test whether doing so evoked different facial expressions. In this regard, one area of interest was the ventral pallidum, which processes the reward experienced from pleasant stimuli.

“I was really surprised how well the optogenetic experiments worked,” said Dolensek. “The optogenetic activation of the ventral pallidum caused the animals to exhibit pleasure-like facial features similar to what we had seen when the animals consumed the sugar water.”

Light activation of neurons in the insular cortex, a brain region critical for emotion and behavior, also resulted in robust facial expressions. The machine learning algorithm successfully classified these evoked facial expressions as well.

Next, the researchers applied the different stimuli while using videography to record facial expressions, and two-photon calcium imaging to identify neurons in the posterior insular cortex activated by specific facial expressions.

“Using this combined technology, we identified neurons that exhibited strong correlations to the facial expression dynamics while only demonstrating low correlations with the actual external stimuli. We termed these neurons ‘face neurons,’” explained Dolensek.
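
The criterion Dolensek describes, a strong correlation with facial expression dynamics but a low correlation with the stimulus, can be sketched as a simple test on time series. The Python below is an illustration under stated assumptions, not the study's analysis: the Pearson correlation, the thresholds `high=0.7` and `low=0.3`, the function `is_face_neuron`, and all traces are hypothetical choices made for this example.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        return 0.0
    return cov / (sx * sy)

def is_face_neuron(activity, face_trace, stimulus_trace, high=0.7, low=0.3):
    """True if activity tracks the face trace closely but the stimulus only weakly.

    The thresholds are hypothetical cutoffs chosen for this sketch.
    """
    r_face = pearson(activity, face_trace)
    r_stim = pearson(activity, stimulus_trace)
    return r_face >= high and abs(r_stim) <= low

# Toy traces: the expression starts late and outlasts each stimulus.
stimulus = [0, 0, 1, 1, 0, 0, 0, 0, 0, 0, 1, 1, 0, 0, 0, 0]
face     = [0, 0, 0, 1, 1, 1, 1, 0, 0, 0, 0, 1, 1, 1, 1, 0]

# A made-up neuron that follows the expression, and one locked to the stimulus.
activity_a = [0.1, 0.0, 0.1, 0.9, 1.0, 0.8, 0.9, 0.1,
              0.0, 0.1, 0.0, 1.0, 0.9, 0.8, 1.0, 0.1]
activity_b = [0.0, 0.1, 1.0, 0.9, 0.1, 0.0, 0.1, 0.0,
              0.1, 0.0, 0.9, 1.0, 0.0, 0.1, 0.0, 0.1]

print("neuron A is a face neuron:", is_face_neuron(activity_a, face, stimulus))
print("neuron B is a face neuron:", is_face_neuron(activity_b, face, stimulus))
```

The two conditions only separate when expression and stimulus diverge in time, which is why the variable onset and duration of the expressions matters for this kind of analysis.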

Importantly, the activity of the face neurons reflected specific features of the facial expressions, including the duration of the expressions as well as their spontaneous nature. Most face neurons also responded to a single emotion, with little overlap among the neurons responding to different emotions.

In sum, the authors linked facial expressions to specific emotions in mice, and showed the existence of face neurons in the insular cortex, whose activity reflects those emotions. But the study does have an important limitation, according to Mogil.

“The only criticism I have is that the authors claim that the facial expressions identified here are generalizable to other stimuli, when the authors have really only investigated one stimulus for each emotional state,” Mogil explained.

“It would be great to determine if, for example, the tail shock facial expression is the same as a painful facial expression elicited by another, longer-lasting pain stimulus such as acetic acid, which may be more generalizable,” Mogil continued. “With that we could determine if there possibly are multiple pain faces for different stimuli, which I predict may be the case since we and others have shown that orbital tightening in the grimace scale is a clear component of the pain face.”

Other questions remain. For instance, why do mice show facial expressions in the first place? The authors note that emotions are often considered particularly important for social interactions, but in this case the mice were not socially interacting with their conspecifics; perhaps facial expressions set the animals on a path to taking action with motor and other behaviors, the researchers speculate.

As for Dolensek, “what is really interesting to me is the link between brain and body, as it has been shown that when you inhibit facial expressions in humans, the subjects have trouble feeling the associated emotion. This link is something I would like to investigate further.”

Francie Moehring is a freelance writer based in Milwaukee, US.